The Online Safety Act is now law

The Online Safety Act has received Royal Assent and is now law, bringing a renewed focus on how online services can provide a safer life online for children and adults in the UK.

Ofcom, the newly appointed internet regulator, now has 18 months to create a series of codes of practice, which the Secretary of State will then present to Parliament. Once a code is approved, a few weeks later, the element of the new regime it covers comes into force.

Ofcom expects industry to work with it, and it expects change. Specifically, it will be focused on ensuring that online services:

- have stronger safety governance so that user safety is represented at all levels of the organisation – from the Board down to product and engineering teams;

- design and operate their services with safety in mind;

- enable users to have more choice and control over their online experience; and

- are more transparent about their safety measures and decision-making to promote trust.

Where Ofcom identifies compliance failures, it can impose fines of up to £18m or 10% of qualifying worldwide revenue (whichever is greater).
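The "whichever is greater" rule can be illustrated with a short calculation. This is only a sketch: the function name is hypothetical, and it assumes qualifying worldwide revenue is already known as a single figure in pounds, which this article does not define.

```python
# Illustrative only: the ceiling on a fine under the "whichever is greater" rule.
# `max_penalty_gbp` is a hypothetical helper, not part of any official tool.

FIXED_CAP_GBP = 18_000_000  # the £18m figure quoted above


def max_penalty_gbp(qualifying_worldwide_revenue_gbp: float) -> float:
    """Return the greater of £18m or 10% of qualifying worldwide revenue."""
    ten_percent = qualifying_worldwide_revenue_gbp / 10
    return max(FIXED_CAP_GBP, ten_percent)


if __name__ == "__main__":
    for revenue in (50_000_000, 180_000_000, 500_000_000):
        print(f"revenue £{revenue:,} -> maximum fine £{max_penalty_gbp(revenue):,.0f}")
```

On these assumptions, the £18m figure sets the ceiling for any service with qualifying revenue below £180m; above that point, the 10% figure takes over.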

The strictest requirements apply to child sexual abuse material and to content that promotes terrorism. While these have long been illegal, the Act provides much stronger enforcement mechanisms, particularly for sites hosted abroad, as Ofcom will be able to demand that Internet Service Providers block access to sites that break this new law.

"These new laws give Ofcom the power to start making a real difference in creating a safer life online for children and adults in the UK. We've already trained and hired expert teams with experience across the online sector, and today we're setting out a clear timeline for holding tech firms to account.

"Ofcom is not a censor, and our new powers are not about taking content down. Our job is to tackle the root causes of harm. We will set new standards online, making sure sites and apps are safer by design. Importantly, we'll also take full account of people's rights to privacy and freedom of expression. We know a safer life online cannot be achieved overnight; but Ofcom is ready to meet the scale and urgency of the challenge."

Dame Melanie Dawes, Ofcom's Chief Executive

How can VerifyMy help?

VerifyMy’s mission is to provide frictionless, trustworthy solutions for online platforms to maintain their integrity, protect their reputation, and safeguard their customers. We do this by offering two solutions: VerifyMyAge & VerifyMyContent.

Age verification and estimation with VerifyMyAge

The highest-profile requirement is to implement “highly effective” age assurance, of the sort offered by VerifyMy’s age assurance solution, VerifyMyAge. Its purpose is to prevent children from being exposed to “primary priority harms”, which fall into four categories: suicide information, self-harm information, dangerous dieting advice and pornography.

Identity verification and content moderation with VerifyMyContent

Services will also be required to identify many forms of harmful content on their platforms, so that they can either remove it altogether or prevent children from seeing it and offer adults the new opt-out. VerifyMy's content moderation solution, VerifyMyContent, provides this business-critical moderation through both automated scanning and human moderation. The risk is particularly acute where users create their own content (for example, where participant consent is necessary) and in live streaming and live chat, where real-time monitoring is necessary.

What’s next?

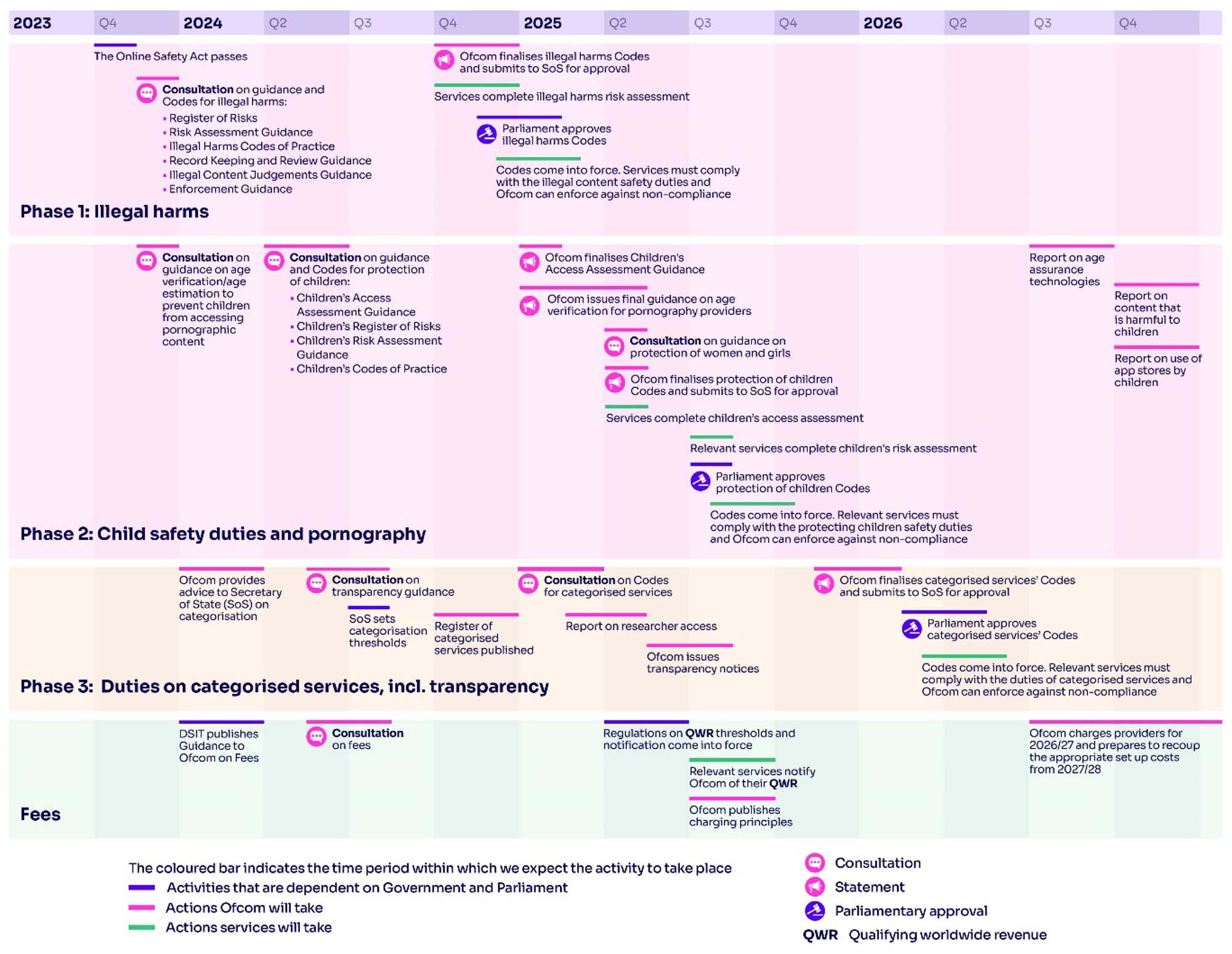

The new laws will roll out in three phases as follows, with the timing driven by the requirements of the Act and relevant secondary legislation:

Phase one: illegal harms duties

Ofcom will publish its first consultation on illegal harms – including child sexual abuse material, terrorist content and fraud – on 9 November 2023. This will contain proposals for how services can comply with the illegal content safety duties and draft codes of practice.

Phase two: child safety, pornography, and protecting women and girls

The first consultation in this phase, due in December 2023, will set out draft guidance for services that host pornographic content. Further consultations relating to the child safety duties under the Act will follow in Spring 2024, while Ofcom will publish draft guidance on protecting women and girls by Spring 2025.

Phase three: transparency, user empowerment, and other duties on categorised services

These duties – including publishing transparency reports and deploying user empowerment measures – apply to services which meet certain criteria related to their number of users or high-risk features of their service. Ofcom will publish advice to the Secretary of State regarding categorisation, and draft guidance on its approach to transparency reporting, in Spring 2024.

Subject to the introduction of secondary legislation setting the thresholds for categorisation, Ofcom expects to publish a register of categorised services by the end of 2024.

Further proposals, including a draft code of practice on fraudulent advertising and transparency notices, will follow in early and mid-2025, respectively, with Ofcom’s final codes and guidance published around the end of that same year.

Image source: Ofcom

Our advice to all user-to-user services and adult content sites within the scope of the law is to begin planning immediately how to comply, to document those plans clearly, and to be as transparent about them as possible without undermining their operational effectiveness.

Please reach out to us to find out how we can help you comply with the Online Safety Act.